By Azib Yaqoob

Want to share Google Search Console access with an SEO manager, SEO staff, or your marketing team? This tutorial has you covered.

Most of my small business SEO clients have a hard time finding this information, so I am writing this tutorial to show how to give or revoke access to a Google Search Console account for anyone you wish.

Why Should You Use Google Search Console?

If you are not already using Google Search Console, you are making one of the big SEO mistakes. Why? Because it is the most useful free SEO tool for insight into how a website actually performs in Google search.

Key benefits of using Google Search Console:

- Check a website's crawling errors.

- Find which websites link to yours.

- Monitor the search queries for which your website shows up in Google.

- Find out how your website performs on mobile devices and desktop computers.

- Submit a sitemap to help Google discover and index your pages.

How to Give Google Search Console Access to an SEO Manager?

First of all, you need a verified property in Google Search Console.

Once your website property is verified, follow these steps:

Log in to your Google Search Console account.

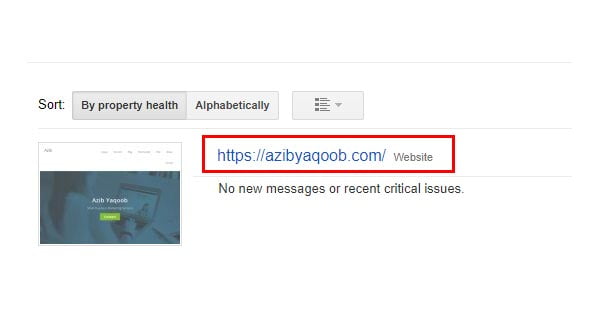

From your Search Console dashboard, select the property you want to share with your SEO manager.

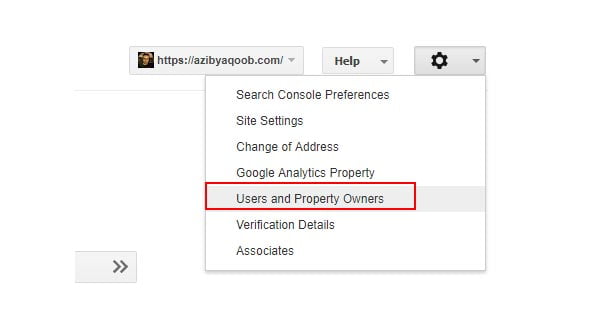

You will now see a settings gear icon at the top right corner of your property dashboard. Clicking it opens a menu.

Here, you need to select the option ‘Users and Property Owners’.

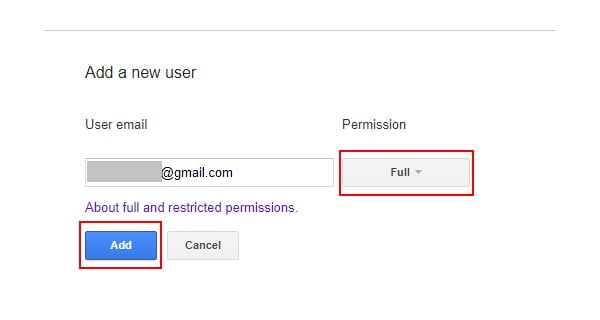

This opens a new page in your Google Search Console account. At the top right corner, you will find a red button labeled ‘ADD A NEW USER’. Clicking it opens a new window where you can enter your SEO manager’s email address.

Next to the email field is a permission box. Use it to choose between restricted access and full access.

- Full user: has view rights to most data and can take some actions.

- Restricted user: has simple view rights on most data.

Full access is usually the right choice, because your SEO manager may need to make changes to your property, such as adding a sitemap or finding and fixing SEO errors.
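If you manage several people, it can help to write the choice down as a simple rule. The sketch below is only an illustration in Python, not a Search Console API: the task names and the `suggest_permission` helper are hypothetical, and the rule just restates the two permission levels described above.

```python
from enum import Enum


class Permission(Enum):
    """The two permission levels offered in the permission box."""
    RESTRICTED = "Restricted user"
    FULL = "Full user"


# Hypothetical task labels, made up for this illustration.
VIEW_ONLY_TASKS = {"view_search_queries", "view_crawl_errors"}
ACTION_TASKS = {"submit_sitemap", "fix_seo_errors"}


def suggest_permission(tasks):
    """Suggest the lowest permission level that covers the given tasks."""
    tasks = set(tasks)
    unknown = tasks - VIEW_ONLY_TASKS - ACTION_TASKS
    if unknown:
        raise ValueError(f"Unknown tasks: {sorted(unknown)}")
    # Any task that changes the property needs a full user.
    if tasks & ACTION_TASKS:
        return Permission.FULL
    return Permission.RESTRICTED


if __name__ == "__main__":
    # An SEO manager who has to submit a sitemap needs full access.
    print(suggest_permission(["view_search_queries", "submit_sitemap"]).value)
    # Someone who only reads reports can stay restricted.
    print(suggest_permission(["view_crawl_errors"]).value)
```

The idea is simply to grant restricted access by default and move to full access only when the person has to change something on the property.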

After selecting the permission, click ‘Add’. The new user will be added to your Google Search Console property and will get a confirmation email from Google with access to it.

What if the job is complete and you want to remove this user from your property? Here’s what you need to do.

How to Remove a User from a Google Search Console Property?

Removing a user from Google Search Console works in a similar way. Log in to your Google Search Console account and select the property from which you want to remove a user.

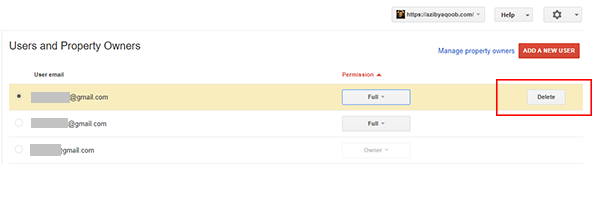

You will find the settings gear icon at the top right corner of the property dashboard. Clicking it opens a menu. From this menu, select ‘Users and Property Owners’.

Now select the user whose access you want to remove. Clicking the username shows a delete option to the right of it.

Pressing the delete button opens a confirmation box. Pressing ‘ok’ removes the user’s access to this Google Search Console property.

I hope this tutorial helped you give and revoke your SEO manager’s access to your Google Search Console account. If you liked this post, feel free to join me on Twitter or subscribe to my newsletter.